clickhousedb_fdw is another PostgreSQL’s Foreign Data Wrapper For ClickHouse, bases on unixodbc.

The clickhousedb_fdw is open-source. It is a Foreign Data Wrapper (FDW) for one of the fastest column store databases, Clickhouse. This FDW allows you to SELECT from, and INSERT into, a ClickHouse database from within a PostgreSQL v11 server.

The FDW supports advanced features like aggregate pushdown and joins pushdown. These significantly improve performance by utilizing the remote server’s resources for these resource intensive operations.

To simplify the job, I use PostgreSQL 11 Docker, it bases on Debian 9.8.

Installing Prerequisites

sudo apt-get install devscripts build-essential cmake unixodbc-dev postgresql-server-dev-11

Dowload and compile clickhouse-odbc

git clone --recursive https://github.com/yandex/clickhouse-odbc

mkdir -p build; cd build && cmake .. && make -j $(nproc || sysctl -n hw.ncpu || echo 4)

make install

Clone clickhousedb_fdw source code and build

git clone https://github.com/Percona-Lab/clickhousedb_fdw

cd clickhousedb_fdw

make USE_PGXS=1

make USE_PGXS=1 install

If you meet the error, means you need install unixodbc-dev first,

In file included from clickhousedb_fdw.c:17:0:

lib/clickhouse-client.h:22:22: fatal error: sqltypes.h: No such file or directory

#include <sqltypes.h>

^

compilation terminated.

sudo apt-get install unixodbc-dev

another issue,

/usr/bin/ld: cannot find -lclickhouse-1.0

It is bug of Makefile, you need run Makefile under lib directory first,

make -f lib/Makefile

Install clickhouse

In order to test the environment, I install both clickhouse-server and clickhouse-client too.

sudo apt-get install dirmngr # optional

sudo apt-key adv --keyserver hkp://keyserver.ubuntu.com:80 --recv E0C56BD4 # optional

​

echo "deb http://repo.yandex.ru/clickhouse/deb/stable/ main/" | sudo tee /etc/apt/sources.list.d/clickhouse.list

sudo apt-get update

​

sudo apt-get install -y clickhouse-server clickhouse-client

​

sudo service clickhouse-server start

#test it

clickhouse-client

clickhouse side

prepare data(create table and import data)

CREATE DATABASE test_database;

USE test_database;

CREATE TABLE tax_bills_nyc

(

bbl Int64,

owner_name String,

address String,

tax_class String,

tax_rate String,

emv Float64,

tbea Float64,

bav Float64,

tba String,

property_tax String,

condonumber String,

condo String,

insertion_date DateTime MATERIALIZED now()

)

ENGINE = MergeTree PARTITION BY tax_class ORDER BY (owner_name)

curl -X GET 'http://taxbills.nyc/tax_bills_june15_bbls.csv' | clickhouse-client --input_format_allow_errors_num=10 --query="INSERT INTO test_database.tax_bills_nyc FORMAT CSV"

​

CREATE TABLE tax_bills

(

bbl bigint,

owner_name text

)

ENGINE = MergeTree

PARTITION BY bbl

ORDER BY bbl;

btw, I suggest download csv first,

curl -X GET 'http://taxbills.nyc/tax_bills_june15_bbls.csv' -O

cat tax_bills_june15_bbls.csv | clickhouse-client --input_format_allow_errors_num=10 --query="INSERT INTO test_database.tax_bills_nyc FORMAT CSV"

PostgreSQL side

Now the data is ready in the ClickHouse, the next step is to set up the PostgreSQL side.

Install clickhousedb_fdw extension first,

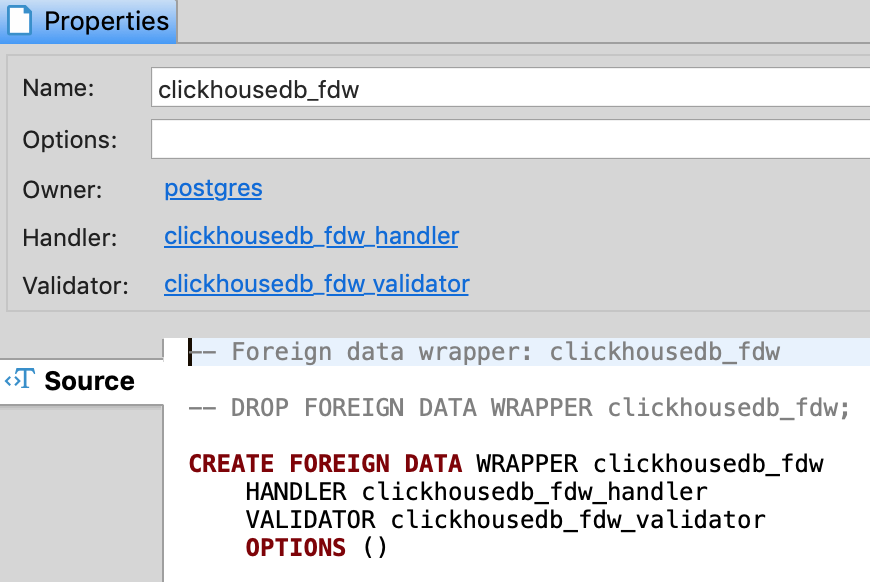

CREATE EXTENSION clickhousedb_fdw;

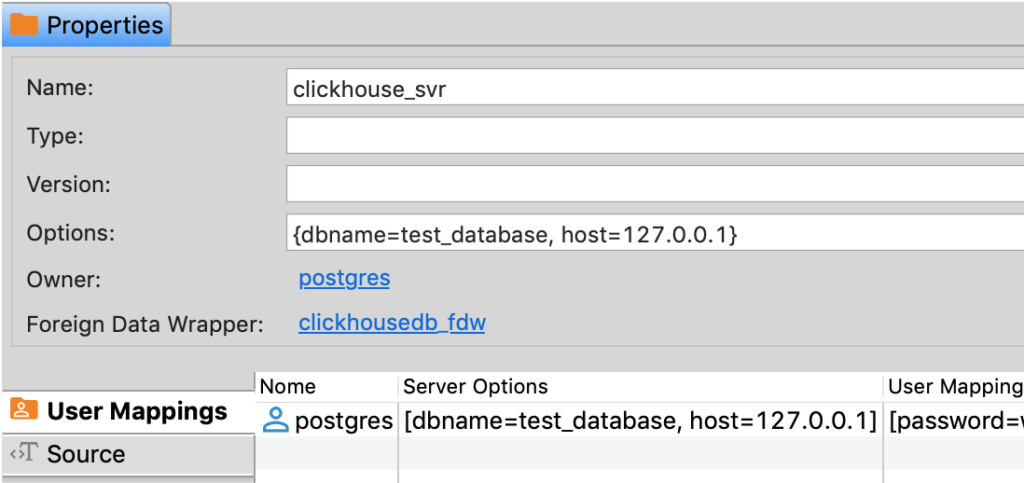

Then create a ClickHouse foreign server, user mapping, foreign tables and test it.

​

CREATE SERVER clickhouse_svr FOREIGN DATA WRAPPER clickhousedb_fdw OPTIONS(dbname 'test_database', driver '/home/vagrant/percona/clickhousedb_fdw/lib/clickhouse-odbc/driver/libclickhouseodbc.so', host '127.0.0.1');

​

CREATE USER MAPPING FOR CURRENT_USER SERVER clickhouse_svr;

postgres=# CREATE FOREIGN TABLE tax_bills_nyc

(

bbl int8,

owner_name text,

address text,

tax_class text,

tax_rate text,

emv Float,

tbea Float,

bav Float,

tba text,

property_tax text,

condonumber text,

condo text,

insertion_date Time

) SERVER clickhouse_svr;

​

postgres=# SELECT bbl,tbea,bav,insertion_date FROM tax_bills_nyc LIMIT 5;

bbl | tbea | bav | insertion_date

------------+-------+--------+----------------

4001940057 | 18755 | 145899 | 15:25:42

1016830130 | 2216 | 17238 | 15:25:42

4012850059 | 69562 | 541125 | 15:25:42

1006130061 | 55883 | 434719 | 15:25:42

3033540009 | 33100 | 257490 | 15:25:42

(5 rows)

​

CREATE TABLE tax_bills ( bbl bigint, owner_name text) ENGINE = MergeTree PARTITION BY bbl ORDER BY (bbl)

​

INSERT INTO tax_bills SELECT bbl, tbea from tax_bills_nyc LIMIT 100;

​

EXPLAIN VERBOSE SELECT bbl,tbea,bav,insertion_date FROM tax_bills_nyc LIMIT 5;

QUERY PLAN

--------------------------------------------------------------------------------------------

Limit (cost=0.00..0.00 rows=1 width=32)

Output: bbl, tbea, bav, insertion_date

-> Foreign Scan on public.tax_bills_nyc (cost=0.00..0.00 rows=0 width=32)

Output: bbl, tbea, bav, insertion_date

Remote SQL: SELECT bbl, tbea, bav, insertion_date FROM test_database.tax_bills_nyc

(5 rows)

Aggregate Pushdown.

Aggregate pushdown is a new feature of PostgreSQL FDW. There are currently very few foreign data wrappers that support aggregate pushdown: clickhousedb_fdw is one of them. Planner decides which aggregate to pushdown or not. Here is an example for both cases.

postgres=# EXPLAIN VERBOSE SELECT count(bbl) FROM tax_bills_nyc LIMIT 5;

QUERY PLAN

---------------------------------------------------------------------------

Limit (cost=0.00..0.01 rows=1 width=8)

Output: (count(bbl))

-> Aggregate (cost=0.00..0.01 rows=1 width=8)

Output: count(bbl)

-> Foreign Scan on public.tax_bills_nyc (cost=0.00..0.00 rows=0 width=8)

Output: bbl, owner_name, address, tax_class, tax_rate, emv, tbea, bav, tba, property_tax, condonumber, condo, insertion_date

Remote SQL: SELECT bbl FROM test_database.tax_bills_nyc

(7 rows)

​

​

EXPLAIN VERBOSE SELECT count(bbl) FROM tax_bills_nyc;

QUERY PLAN

------------------------------------------------------------------

Foreign Scan (cost=1.00..-1.00 rows=1000 width=8)

Output: (count(bbl))

Relations: Aggregate on (tax_bills_nyc)

Remote SQL: SELECT count(bbl) FROM test_database.tax_bills_nyc

(4 rows)

Join Pushdown

Join pushdown is also a very new feature of PostgreSQL FDW’s. The clickhousedb_fdw also supports join pushdown.

EXPLAIN VERBOSE SELECT t2.bbl, t2.owner_name, t1.bav FROM tax_bills_nyc t1 RIGHT OUTER JOIN tax_bills t2 ON (t1.bbl = t2.bbl);

QUERY PLAN

​

-------------------------------------------------------------------------------------------------------------------------------------------------------

----

Foreign Scan (cost=1.00..-1.00 rows=1000 width=50)

Output: t2.bbl, t2.owner_name, t1.bav

Relations: (tax_bills t2) LEFT JOIN (tax_bills_nyc t1)

Remote SQL: SELECT r2.bbl, r2.owner_name, r1.bav FROM test_database.tax_bills r2 ALL LEFT JOIN test_database.tax_bills_nyc r1 ON (((r1.bbl = r2.bbl

)))

(4 rows)

Some screen shot

1 thought on “how to compile and install clickhousedb_fdw”